Many-Shot In-Context Learning

All the details around teaching LLMs by giving examples!

July 5, 2024 · 2 min read

Clustering tokens to make ColBERT more efficient and usable with Vector DBs!

Combining small LLMs to outperform larger monolithic LLMs!

Using Metadata Filters to Improve Recall in RAG!

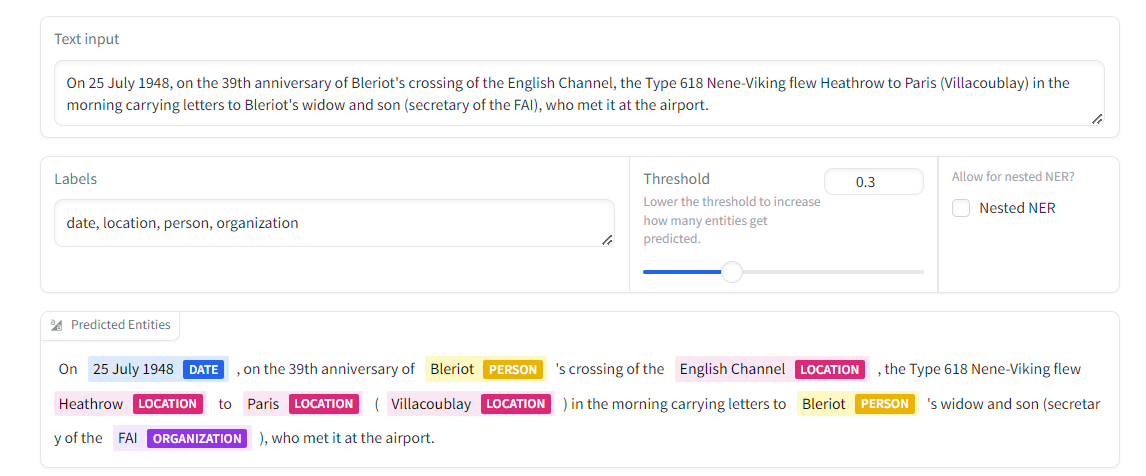

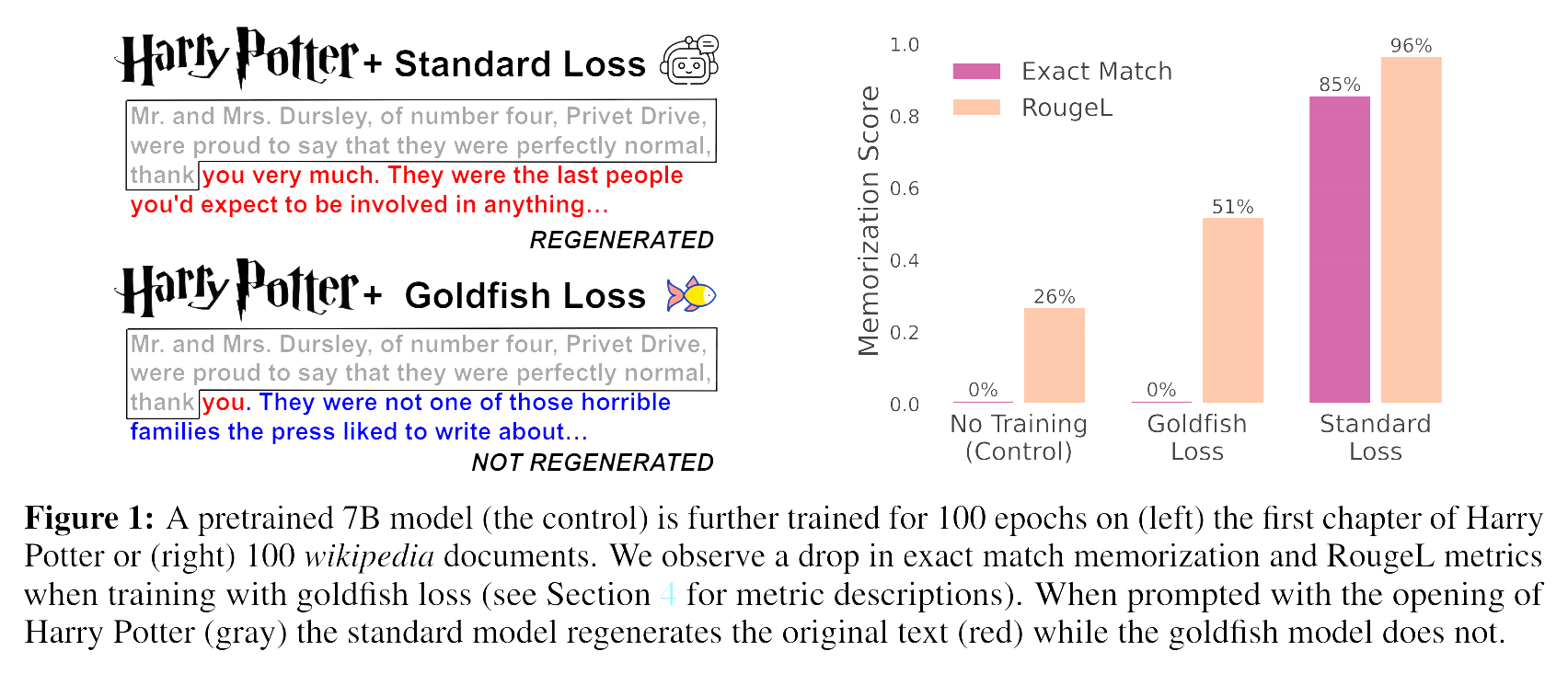

Training LLMs without making them memorize!