Jina AI Multimodal Embeddings with Weaviate

Added in `v1.25.26`, `v1.26.11` and `v1.27.5`

Weaviate's integration with Jina AI's APIs allows you to access their models' capabilities directly from Weaviate.

Configure a Weaviate vector index to use a Jina AI embedding model, and Weaviate will generate embeddings for various operations using the specified model and your Jina AI API key. This feature is called the vectorizer.

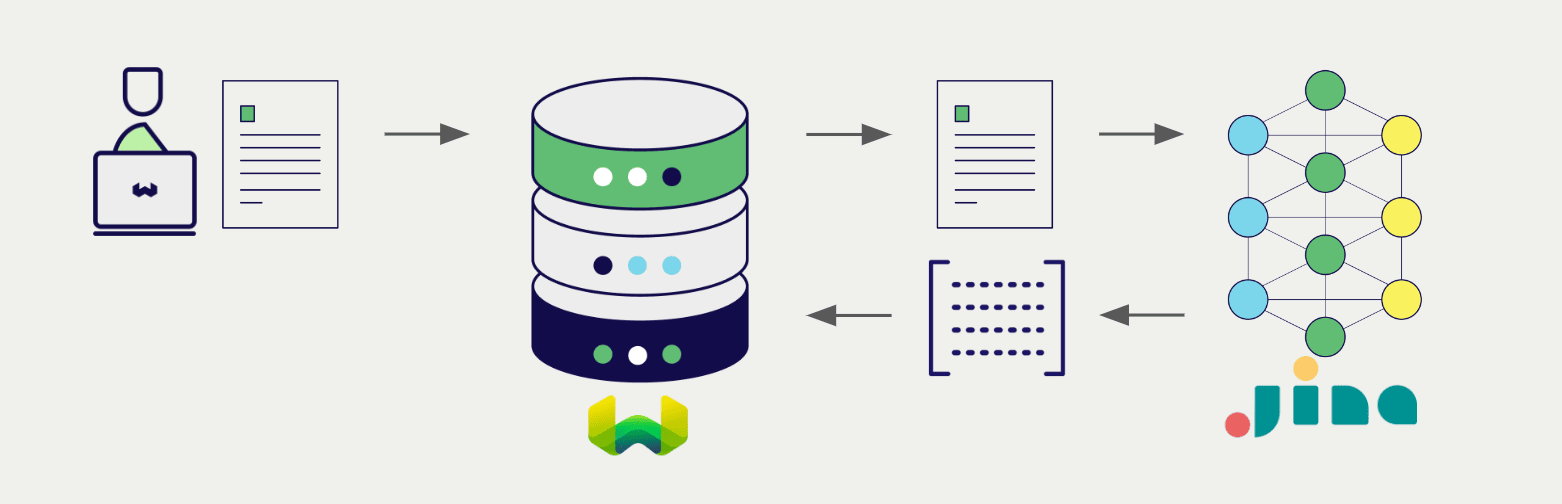

At import time, Weaviate generates multimodal object embeddings and saves them into the index. For vector and hybrid search operations, Weaviate converts queries of one or more modalities into embeddings. Multimodal search operations are also supported.

Requirements

Weaviate configuration

Your Weaviate instance must be configured with the Jina AI multimodal vectorizer integration (multi2vec-jinaai) module.

For Weaviate Cloud (WCD) users

This integration is enabled by default on Weaviate Cloud (WCD) serverless instances.

For self-hosted users

- Check the cluster metadata to verify if the module is enabled.

- Follow the how-to configure modules guide to enable the module in Weaviate.
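The metadata check in the first step can be sketched as below. This is a minimal illustration, assuming the cluster metadata is a dictionary whose `modules` entry is keyed by module name (the shape returned by the Python client's `client.get_meta()`). The helper name `module_enabled` and the sample metadata are hypothetical.

```python
def module_enabled(meta: dict, module_name: str = "multi2vec-jinaai") -> bool:
    """Return True if `module_name` is listed in the cluster metadata."""
    # Assumption: enabled modules appear as keys under meta["modules"].
    modules = meta.get("modules") or {}
    return module_name in modules


# Illustrative metadata only; a real instance reports its own modules and version.
sample_meta = {
    "version": "1.27.5",
    "modules": {"multi2vec-jinaai": {}, "text2vec-jinaai": {}},
}
print(module_enabled(sample_meta))
```

With a connected client, `module_enabled(client.get_meta())` would run the same check against your own instance.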

API credentials

You must provide a valid Jina AI API key to Weaviate for this integration. Go to Jina AI to sign up and obtain an API key.

Provide the API key to Weaviate using one of the following methods:

- Set the `JINAAI_APIKEY` environment variable that is available to Weaviate.

- Provide the API key at runtime, as shown in the examples below.

Python API v4:

    import os

    import weaviate
    from weaviate.classes.init import Auth

    # Recommended: save sensitive data as environment variables
    # (the two Weaviate variable names below are placeholders)
    weaviate_url = os.getenv("WEAVIATE_URL")  # `weaviate_url`: your Weaviate URL
    weaviate_key = os.getenv("WEAVIATE_API_KEY")  # `weaviate_key`: your Weaviate API key
    jinaai_key = os.getenv("JINAAI_APIKEY")

    headers = {
        "X-JinaAI-Api-Key": jinaai_key,
    }

    client = weaviate.connect_to_weaviate_cloud(
        cluster_url=weaviate_url,
        auth_credentials=Auth.api_key(weaviate_key),
        headers=headers
    )

    # Work with Weaviate

    client.close()

JS/TS API v3:

    import weaviate from 'weaviate-client'

    const jinaaiApiKey = process.env.JINAAI_APIKEY || ''; // Replace with your inference API key

    const client = await weaviate.connectToWeaviateCloud(
      'WEAVIATE_INSTANCE_URL', // Replace with your instance URL
      {
        authCredentials: new weaviate.ApiKey('WEAVIATE_INSTANCE_APIKEY'),
        headers: {
          'X-JinaAI-Api-Key': jinaaiApiKey,
        }
      }
    )

    // Work with Weaviate

    client.close()
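When the key is supplied at runtime, it can help to fail early if the environment variable is missing rather than sending an empty header. The sketch below shows one way to do that. The helper name `build_jinaai_headers` is hypothetical; only the `JINAAI_APIKEY` variable and the `X-JinaAI-Api-Key` header come from the examples above.

```python
import os


def build_jinaai_headers(env=None) -> dict:
    """Build the request headers that carry the Jina AI API key."""
    # Accept any mapping so the helper can be exercised without touching os.environ.
    env = os.environ if env is None else env
    key = env.get("JINAAI_APIKEY", "")
    if not key:
        # Fail early instead of passing an empty key on to Weaviate.
        raise ValueError("JINAAI_APIKEY is not set")
    return {"X-JinaAI-Api-Key": key}


# Example with an explicit mapping; in practice, call build_jinaai_headers()
# with no arguments to read the real environment.
headers = build_jinaai_headers({"JINAAI_APIKEY": "example-key"})
print(headers)
```

The resulting dictionary can be passed as the `headers` argument when connecting.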

Configure the vectorizer

Configure a Weaviate index as follows to use a Jina AI embedding model:

Python API v4:

    from weaviate.classes.config import Configure, DataType, Multi2VecField, Property

    client.collections.create(
        "DemoCollection",
        properties=[
            Property(name="title", data_type=DataType.TEXT),
            Property(name="poster", data_type=DataType.BLOB),
        ],
        vectorizer_config=[
            Configure.NamedVectors.multi2vec_jinaai(
                name="title_vector",
                # Define the fields to be used for the vectorization - using image_fields, text_fields
                image_fields=[
                    Multi2VecField(name="poster", weight=0.9)
                ],
                text_fields=[
                    Multi2VecField(name="title", weight=0.1)
                ],
            )
        ],
        # Additional parameters not shown
    )

JS/TS API v3:

    await client.collections.create({
      name: 'DemoCollection',
      properties: [
        {
          name: 'title',
          dataType: 'text' as const,
        },
        {
          name: 'poster',
          dataType: 'blob' as const,
        },
      ],
      vectorizers: [
        weaviate.configure.vectorizer.multi2VecJinaAI({
          name: 'title_vector',
          imageFields: [{
            name: "poster",
            weight: 0.9
          }],
          textFields: [{
            name: "title",
            weight: 0.1
          }]
        }),
      ],
      // Additional parameters not shown
    });

Select a model

You can specify one of the available models for the vectorizer to use, as shown in the following configuration example.

Python API v4:

    from weaviate.classes.config import Configure, DataType, Multi2VecField, Property

    client.collections.create(
        "DemoCollection",
        properties=[
            Property(name="title", data_type=DataType.TEXT),
            Property(name="poster", data_type=DataType.BLOB),
        ],
        vectorizer_config=[
            Configure.NamedVectors.multi2vec_jinaai(
                name="title_vector",
                # Define the fields to be used for the vectorization - using image_fields, text_fields
                image_fields=[
                    Multi2VecField(name="poster", weight=0.9)
                ],
                text_fields=[
                    Multi2VecField(name="title", weight=0.1)
                ],
                model="jina-clip-v2",
            )
        ],
    )

JS/TS API v3:

    await client.collections.create({
      name: 'DemoCollection',
      properties: [
        {
          name: 'title',
          dataType: 'text' as const,
        },
        {
          name: 'poster',
          dataType: 'blob' as const,
        },
      ],
      vectorizers: [
        weaviate.configure.vectorizer.multi2VecJinaAI({
          name: 'title_vector',
          imageFields: [{
            name: "poster",
            weight: 0.9
          }],
          textFields: [{
            name: "title",
            weight: 0.1
          }],
          model: "jina-clip-v2"
        }),
      ],
      // Additional parameters not shown
    });

If no model is specified, the default model is used.

Vectorization behavior

Weaviate follows the collection configuration and a set of predetermined rules to vectorize objects.

Unless specified otherwise in the collection definition, the default behavior is to:

- Only vectorize properties that use the `text` or `text[]` data type (unless skipped)

- Sort properties in alphabetical (a-z) order before concatenating values

- If `vectorizePropertyName` is `true` (`false` by default) prepend the property name to each property value

- Join the (prepended) property values with spaces

- Prepend the class name (unless `vectorizeClassName` is `false`)

- Convert the produced string to lowercase
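The rules above can be sketched as a small function. This is an illustration of the listed steps only, not Weaviate's actual implementation. The function name, its parameters, and the choice to join `text[]` items with spaces are assumptions made for the example.

```python
def build_vectorization_text(
    class_name: str,
    properties: dict,
    vectorize_property_name: bool = False,
    vectorize_class_name: bool = True,
) -> str:
    """Build the string to vectorize, following the default rules listed above."""
    parts = []
    # Sort properties alphabetically (a-z) by name before concatenating values.
    for name in sorted(properties):
        value = properties[name]
        # Only text and text[] values are used; other data types are skipped.
        if isinstance(value, str):
            text = value
        elif isinstance(value, list) and all(isinstance(v, str) for v in value):
            text = " ".join(value)  # Assumption: text[] items are joined with spaces
        else:
            continue
        if not text:
            continue
        if vectorize_property_name:
            text = f"{name} {text}"  # Prepend the property name to its value
        parts.append(text)
    if vectorize_class_name:
        parts.insert(0, class_name)  # Prepend the class name
    # Join with spaces and convert the result to lowercase.
    return " ".join(parts).lower()


example = build_vectorization_text(
    "DemoCollection",
    {"title": "A Holiday Film", "genres": ["Comedy", "Family"], "year": 2003},
)
print(example)  # democollection comedy family a holiday film
```

Here `year` is skipped because it is not a text value, and `genres` comes before `title` because of the alphabetical sort.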

Vectorizer parameters

The following examples show how to configure Jina AI-specific options.

Python API v4:

    from weaviate.classes.config import Configure, DataType, Multi2VecField, Property

    client.collections.create(
        "DemoCollection",
        properties=[
            Property(name="title", data_type=DataType.TEXT),
            Property(name="poster", data_type=DataType.BLOB),
        ],
        vectorizer_config=[
            Configure.NamedVectors.multi2vec_jinaai(
                name="title_vector",
                # Define the fields to be used for the vectorization - using image_fields, text_fields
                image_fields=[
                    Multi2VecField(name="poster", weight=0.9)
                ],
                text_fields=[
                    Multi2VecField(name="title", weight=0.1)
                ],
                # Further options
                # model="jina-clip-v2",
                # dimensions=512,  # Only applicable for some models (e.g. `jina-clip-v2`)
            )
        ],
        # Additional parameters not shown
    )

JS/TS API v3:

    await client.collections.create({
      name: 'DemoCollection',
      properties: [
        {
          name: 'title',
          dataType: 'text' as const,
        },
        {
          name: 'poster',
          dataType: 'blob' as const,
        },
      ],
      vectorizers: [
        weaviate.configure.vectorizer.multi2VecJinaAI({
          name: 'title_vector',
          imageFields: [{
            name: "poster",
            weight: 0.9
          }],
          textFields: [{
            name: "title",
            weight: 0.1
          }],
          // Further options
          // model: "jina-clip-v2",
        }),
      ],
      // Additional parameters not shown
    });

The Jina AI-specific parameters are:

- `model`: The model name.

- `dimensions`: The number of dimensions for the model.

  - Note that not all models support this parameter.

Data import

After configuring the vectorizer, import data into Weaviate. Weaviate generates an embedding for each object using the specified model, based on the configured text and image fields.

Python API v4:

    collection = client.collections.get("DemoCollection")

    # `source_objects` is your list of source records; `url_to_base64` is the
    # helper defined in the near media search example below
    with collection.batch.fixed_size(batch_size=200) as batch:
        for src_obj in source_objects:
            poster_b64 = url_to_base64(src_obj["poster_path"])
            weaviate_obj = {
                "title": src_obj["title"],
                "poster": poster_b64  # Add the image in base64 encoding
            }

            # The model provider integration will automatically vectorize the object
            batch.add_object(
                properties=weaviate_obj,
                # vector=vector  # Optionally provide a pre-obtained vector
            )

JS/TS API v3:

    const collectionName = 'DemoCollection'
    const myCollection = client.collections.use(collectionName)

    // `mmSrcObjects` is your list of source records
    const multiModalObjects = []

    for (const mmSrcObject of mmSrcObjects) {
      multiModalObjects.push({
        title: mmSrcObject.title,
        poster: mmSrcObject.poster, // Add the image in base64 encoding
      });
    }

    // The model provider integration will automatically vectorize the object
    const mmInsertResponse = await myCollection.data.insertMany(multiModalObjects);

    console.log(mmInsertResponse);

If you already have a compatible model vector available, you can provide it directly to Weaviate. This can be useful if you have already generated embeddings using the same model and want to use them in Weaviate, such as when migrating data from another system.
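As a rough sketch of supplying your own vector, the helper below builds the keyword arguments for an insert call. It assumes the collection uses the named vector `title_vector` from the configuration examples, and that a named vector is passed as a mapping from vector name to values. The helper name `object_kwargs` is hypothetical.

```python
def object_kwargs(properties: dict, vector=None, vector_name: str = "title_vector") -> dict:
    """Build keyword arguments for an insert call, optionally with a pre-computed vector."""
    kwargs = {"properties": properties}
    if vector is not None:
        # Assumption: named vectors are supplied as {vector_name: [floats]}.
        kwargs["vector"] = {vector_name: list(vector)}
    return kwargs


# The three-element vector is a placeholder; a real vector must have the
# dimensionality of the configured model.
kwargs = object_kwargs({"title": "A Holiday Film"}, vector=[0.1, 0.2, 0.3])
print(kwargs)
```

Inside a batch context these arguments could be passed on as `batch.add_object(**kwargs)`. When `vector` is omitted, Weaviate vectorizes the object itself.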

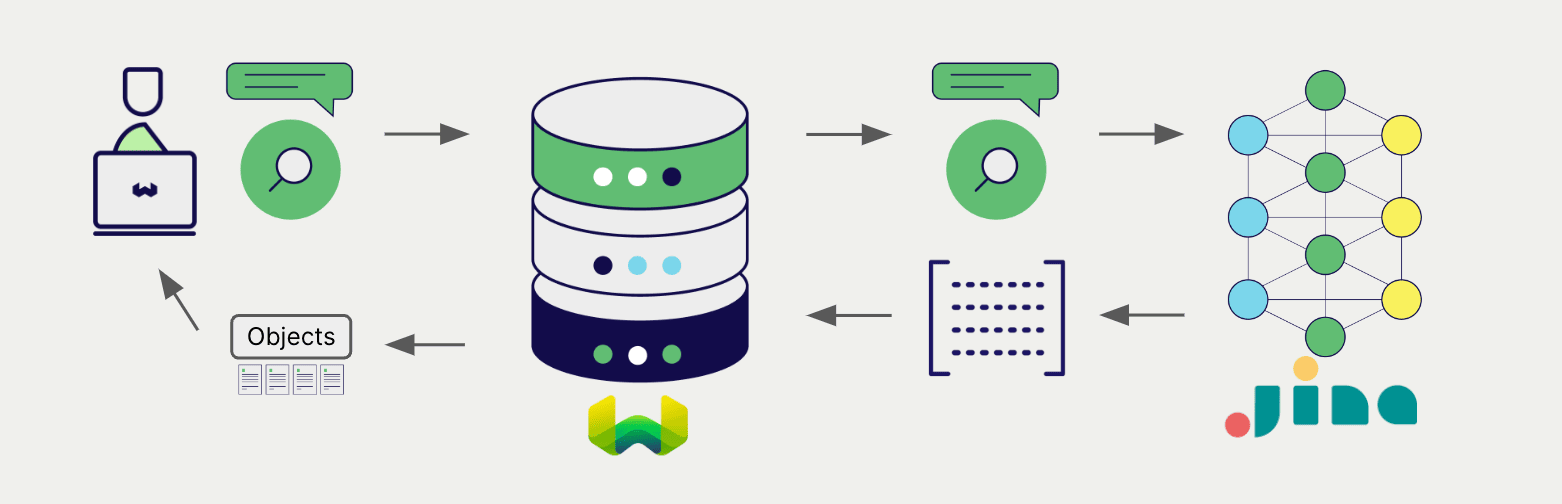

Searches

Once the vectorizer is configured, Weaviate will perform vector and hybrid search operations using the specified Jina AI model.

Vector (near text) search

When you perform a vector search, Weaviate converts the text query into an embedding using the specified model and returns the most similar objects from the database.

The query below returns the `n` most similar objects from the database, where `n` is set by `limit`.

Python API v4:

    collection = client.collections.get("DemoCollection")

    response = collection.query.near_text(
        query="A holiday film",  # The model provider integration will automatically vectorize the query
        limit=2
    )

    for obj in response.objects:
        print(obj.properties["title"])

JS/TS API v3:

    const collectionName = 'DemoCollection'
    const myCollection = client.collections.use(collectionName)

    const result = await myCollection.query.nearText(
      'A holiday film', // The model provider integration will automatically vectorize the query
      {
        limit: 2,
      }
    )

    console.log(JSON.stringify(result.objects, null, 2));
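To make "most similar" concrete, the sketch below ranks a few stored vectors against a query vector by cosine similarity and keeps the top `limit` results. It is a brute-force illustration with made-up two-dimensional vectors. Weaviate itself searches a vector index rather than comparing against every object, and the helper names are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity of two non-zero vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_n(query_vec, stored, limit):
    """Return the titles of the `limit` stored vectors most similar to the query."""
    scored = [(cosine_similarity(query_vec, vec), title) for title, vec in stored.items()]
    scored.sort(reverse=True)  # Highest similarity first
    return [title for _, title in scored[:limit]]


# Made-up embeddings standing in for the vectors saved at import time.
stored = {
    "Film A": [1.0, 0.0],
    "Film B": [0.6, 0.8],
    "Film C": [0.0, 1.0],
}
print(top_n([0.9, 0.1], stored, limit=2))  # ['Film A', 'Film B']
```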

Hybrid search

A hybrid search performs a vector search and a keyword (BM25) search, before combining the results to return the best matching objects from the database.

When you perform a hybrid search, Weaviate converts the text query into an embedding using the specified model and returns the best scoring objects from the database.

The query below returns the `n` best scoring objects from the database, where `n` is set by `limit`.

Python API v4:

    collection = client.collections.get("DemoCollection")

    response = collection.query.hybrid(
        query="A holiday film",  # The model provider integration will automatically vectorize the query
        limit=2
    )

    for obj in response.objects:
        print(obj.properties["title"])

JS/TS API v3:

    const collectionName = 'DemoCollection'
    const myCollection = client.collections.use(collectionName)

    const result = await myCollection.query.hybrid(
      'A holiday film', // The model provider integration will automatically vectorize the query
      {
        limit: 2,
      }
    )

    console.log(JSON.stringify(result.objects, null, 2));
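One simple way to picture how vector and keyword results can be combined is a weighted sum of normalized scores, sketched below. This is a simplified illustration and not Weaviate's implementation. The `alpha` weighting, the helper names and all scores are assumptions made up for the example.

```python
def normalize(scores: dict) -> dict:
    """Min-max normalize scores to the range [0, 1]."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {key: 1.0 for key in scores}
    return {key: (value - lo) / (hi - lo) for key, value in scores.items()}


def fuse(vector_scores: dict, keyword_scores: dict, alpha: float = 0.5, limit: int = 2) -> list:
    """Rank objects by alpha * vector score + (1 - alpha) * keyword score."""
    v = normalize(vector_scores)
    k = normalize(keyword_scores)
    combined = {
        key: alpha * v.get(key, 0.0) + (1 - alpha) * k.get(key, 0.0)
        for key in set(v) | set(k)
    }
    # Sort by combined score, highest first; break ties by name for stable output.
    ranked = sorted(combined, key=lambda key: (-combined[key], key))
    return ranked[:limit]


# Made-up scores: similarities from a vector search and BM25-style keyword scores.
vector_scores = {"Film A": 0.9, "Film B": 0.7, "Film C": 0.2}
keyword_scores = {"Film B": 3.0, "Film C": 2.0, "Film D": 1.0}
print(fuse(vector_scores, keyword_scores, alpha=0.5, limit=2))  # ['Film B', 'Film A']
```

"Film B" ranks first because it scores well in both searches, while "Film A" appears only in the vector results.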

Vector (near media) search

When you perform a media search such as a near image search, Weaviate converts the query into an embedding using the specified model and returns the most similar objects from the database.

To perform a near media search such as near image search, convert the media query into a base64 string and pass it to the search query.

The query below returns the `n` objects most similar to the input image, where `n` is set by `limit`.

Python API v4:

    import base64

    import requests

    def url_to_base64(url):
        image_response = requests.get(url)
        image_response.raise_for_status()  # Stop on HTTP errors instead of encoding an error page
        content = image_response.content
        return base64.b64encode(content).decode("utf-8")

    collection = client.collections.get("DemoCollection")

    query_b64 = url_to_base64(src_img_path)  # `src_img_path`: URL of your query image

    response = collection.query.near_image(
        near_image=query_b64,
        limit=2,
        return_properties=["title", "poster"]  # To include the poster property in the response (`blob` properties are not returned by default)
    )

    for obj in response.objects:
        print(obj.properties["title"])

JS/TS API v3:

    const myCollection = client.collections.use('DemoCollection')

    const base64String = 'SOME_BASE_64_REPRESENTATION';

    const result = await myCollection.query.nearImage(
      base64String, // The model provider integration will automatically vectorize the query
      {
        limit: 2,
      }
    )

    console.log(JSON.stringify(result.objects, null, 2));
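The base64 conversion step described above also applies to images stored on disk. The sketch below is a local-file counterpart to `url_to_base64` that uses only the standard library. The helper name `file_to_base64` is hypothetical, and the sample file is a stand-in for a real image.

```python
import base64
import tempfile
from pathlib import Path


def file_to_base64(path) -> str:
    """Read a file from disk and return its contents as a base64 string."""
    return base64.b64encode(Path(path).read_bytes()).decode("utf-8")


# Write a small placeholder file so the example runs without a real image.
with tempfile.TemporaryDirectory() as tmp:
    sample = Path(tmp) / "poster.bin"
    sample.write_bytes(b"not a real image")
    encoded = file_to_base64(sample)

print(encoded)
```

The returned string can be used as the query for a near image search or as the value of a `blob` property at import time.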

References

Available models

- `jina-clip-v2`

  - This model is a multilingual, multimodal model using Matryoshka Representation Learning.

  - It will accept a `dimensions` parameter, which can be any integer between (and including) 64 and 1024. The default value is 1024.

- `jina-clip-v1`

  - This model will always return a 768-dimensional embedding.
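The dimension rules above can be checked before creating a collection. The sketch below encodes only the limits stated in this list. The helper name `resolve_dimensions` is hypothetical and is not part of the Weaviate client.

```python
def resolve_dimensions(model: str, dimensions=None) -> int:
    """Return the embedding size for a model, validating an optional `dimensions` value."""
    if model == "jina-clip-v2":
        if dimensions is None:
            return 1024  # Default stated for jina-clip-v2
        if not isinstance(dimensions, int) or not 64 <= dimensions <= 1024:
            raise ValueError("jina-clip-v2 dimensions must be an integer from 64 to 1024")
        return dimensions
    if model == "jina-clip-v1":
        if dimensions not in (None, 768):
            raise ValueError("jina-clip-v1 always returns 768-dimensional embeddings")
        return 768
    raise ValueError(f"unknown model: {model}")


print(resolve_dimensions("jina-clip-v2"))       # 1024
print(resolve_dimensions("jina-clip-v2", 512))  # 512
print(resolve_dimensions("jina-clip-v1"))       # 768
```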

Further resources

Other integrations

- Jina AI text embedding models + Weaviate.

- Jina AI ColBERT embedding models + Weaviate.

- Jina AI reranker models + Weaviate.

Code examples

Once the integrations are configured for the collection, data management and search operations in Weaviate work the same way as for any other collection. See the following model-agnostic examples:

- The how-to: manage data guides show how to perform data operations (i.e. create, update, delete).

- The how-to: search guides show how to perform search operations (i.e. vector, keyword, hybrid) as well as retrieval augmented generation.

External resources

- Jina AI Embeddings API documentation

Questions and feedback

If you have any questions or feedback, let us know in the user forum.