Google Generative AI with Weaviate

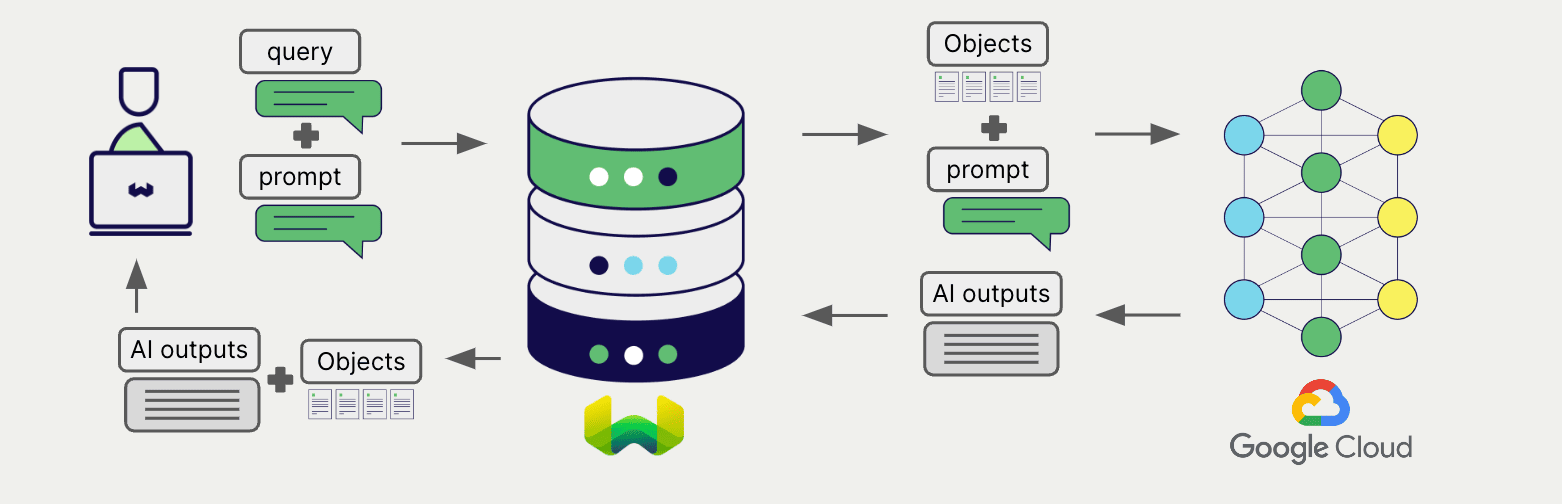

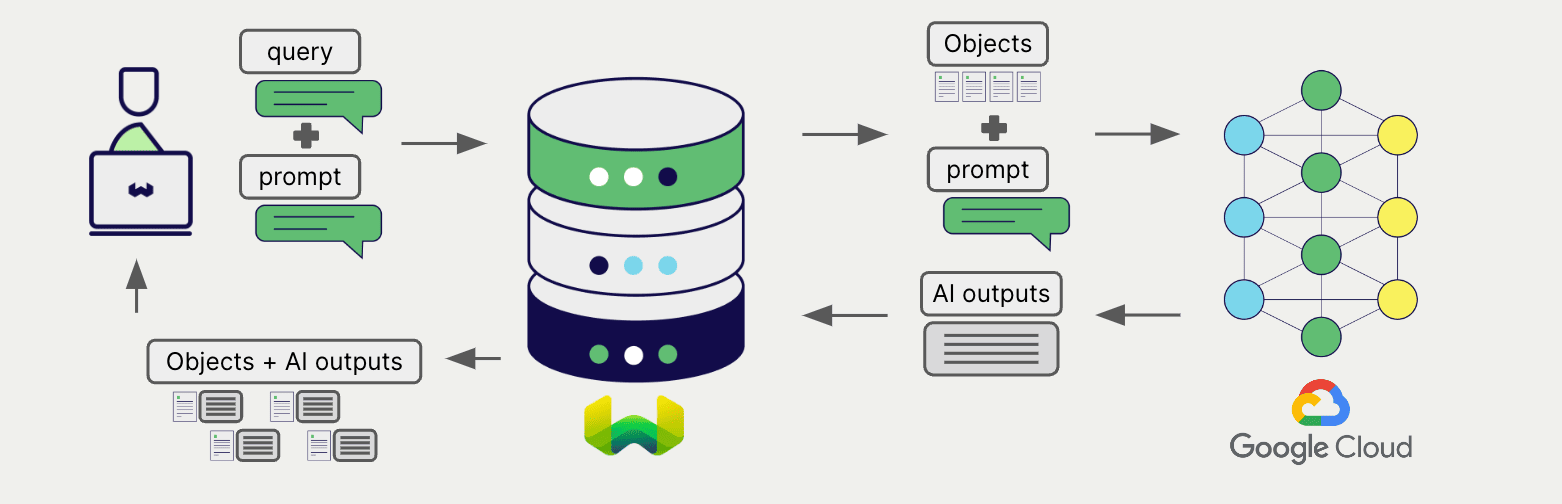

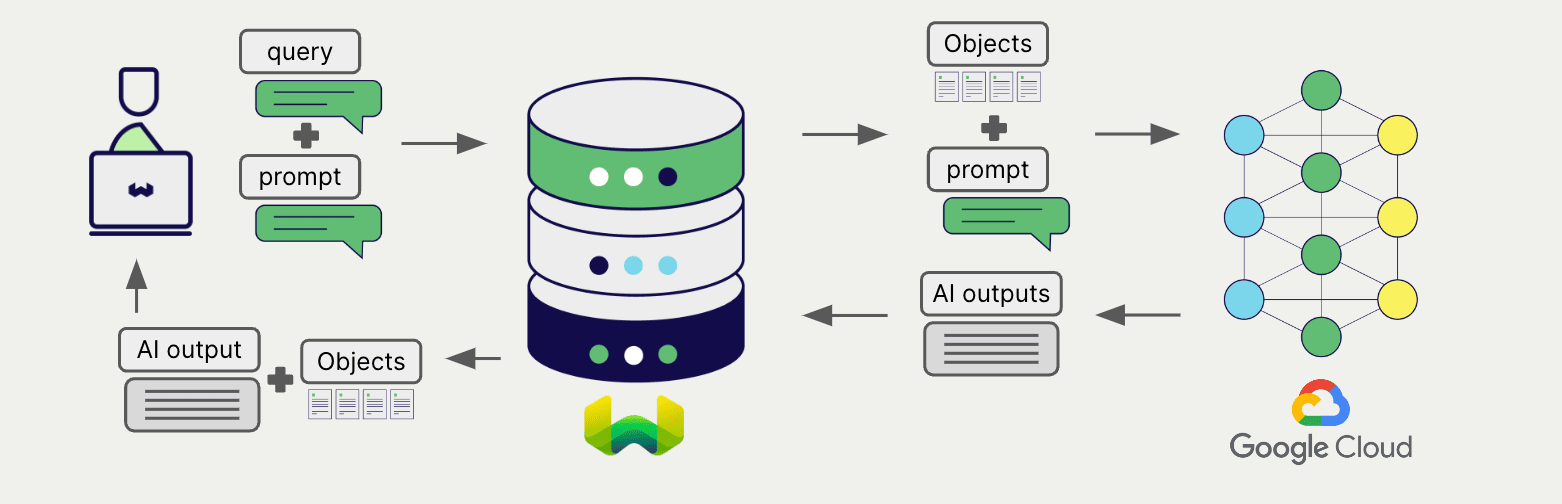

Weaviate's integration with Google AI Studio and Google Vertex AI APIs allows you to access their models' capabilities directly from Weaviate.

Configure a Weaviate collection to use a generative AI model with Google. Weaviate will perform retrieval augmented generation (RAG) using the specified model and your Google API key.

More specifically, Weaviate will perform a search, retrieve the most relevant objects, and then pass them to the Google generative model to generate outputs.

At the time of writing (November 2023), AI Studio is not available in all regions. See this page for the latest information.

Requirements

Weaviate configuration

Your Weaviate instance must be configured with the Google generative AI integration (generative-google) module.

generative-google was called generative-palm in Weaviate versions prior to v1.27.

For Weaviate Cloud (WCD) users

This integration is enabled by default on Weaviate Cloud (WCD) serverless instances.

For self-hosted users

- Check the cluster metadata to verify if the module is enabled.

- Follow the how-to configure modules guide to enable the module in Weaviate.
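As a rough sketch of the first check, the cluster metadata can be inspected from Python. This assumes the metadata is a dictionary with a `modules` mapping keyed by module name, such as the Python client's `get_meta()` returns. `is_generative_google_enabled` is a hypothetical helper name, and the sample metadata is made up for illustration.

```python
def is_generative_google_enabled(meta: dict) -> bool:
    """Return True if the Google generative module appears in the cluster metadata.

    Assumes `meta` has a "modules" mapping keyed by module name.
    Also accepts generative-palm, the module's name before v1.27.
    """
    modules = meta.get("modules") or {}
    return any(name in modules for name in ("generative-google", "generative-palm"))


# Made-up metadata for illustration; on a live instance you might use
# `meta = client.get_meta()` instead.
sample_meta = {"version": "1.27.0", "modules": {"generative-google": {}}}
print(is_generative_google_enabled(sample_meta))
```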

API credentials

You must provide valid API credentials to Weaviate for the appropriate integration.

AI Studio

Go to Google AI Studio to sign up and obtain an API key.

Vertex AI

Vertex AI authenticates with what Google Cloud calls an access token.

Automatic token generation

Available from Weaviate versions 1.24.16, 1.25.3 and 1.26.

This feature is not available on Weaviate Cloud instances.

You can save your Google Vertex AI credentials and have Weaviate generate the necessary tokens for you. This enables use of IAM service accounts in private deployments that can hold Google credentials.

To do so:

- Set the USE_GOOGLE_AUTH environment variable to true.

- Have the credentials available in one of the following locations.

Once appropriate credentials are found, Weaviate uses them to generate an access token and authenticates itself against Vertex AI. Upon token expiry, Weaviate generates a replacement access token.

In a containerized environment, you can mount the credentials file to the container. For example, you can mount the credentials file to the /etc/weaviate/ directory and set the GOOGLE_APPLICATION_CREDENTIALS environment variable to /etc/weaviate/google_credentials.json.

Search locations for Google Vertex AI credentials

Once USE_GOOGLE_AUTH is set to true, Weaviate will look for credentials in the following places, preferring the first location found:

- A JSON file whose path is specified by the GOOGLE_APPLICATION_CREDENTIALS environment variable. For workload identity federation, refer to this link on how to generate the JSON configuration file for on-prem/non-Google cloud platforms.

- A JSON file in a location known to the gcloud command-line tool. On Windows, this is %APPDATA%/gcloud/application_default_credentials.json. On other systems, $HOME/.config/gcloud/application_default_credentials.json.

- On Google App Engine standard first generation runtimes (<= Go 1.9) it uses the appengine.AccessToken function.

- On Google Compute Engine, Google App Engine standard second generation runtimes (>= Go 1.11), and Google App Engine flexible environment, it fetches credentials from the metadata server.
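The search order above can be sketched in Python for the two file-based locations. This is only an illustration of the lookup order and not Weaviate's own code. `find_credentials_file` is a hypothetical helper, and it does not cover the App Engine or metadata server cases or validate the file contents.

```python
import os
from pathlib import Path
from typing import Mapping, Optional


def find_credentials_file(env: Mapping[str, str], is_windows: bool = False) -> Optional[Path]:
    """Return the first file-based credentials location, or None.

    Illustrative only: covers the two file-based locations, not the
    App Engine or metadata-server fallbacks.
    """
    # 1. A path given explicitly through GOOGLE_APPLICATION_CREDENTIALS wins.
    #    It is returned as-is; this sketch does not check that the file is valid.
    explicit = env.get("GOOGLE_APPLICATION_CREDENTIALS")
    if explicit:
        return Path(explicit)

    # 2. Otherwise, look in the location known to the gcloud command-line tool.
    if is_windows:
        base = env.get("APPDATA")
    else:
        home = env.get("HOME")
        base = os.path.join(home, ".config") if home else None
    if base:
        candidate = Path(base) / "gcloud" / "application_default_credentials.json"
        if candidate.is_file():
            return candidate
    return None


print(find_credentials_file(os.environ, is_windows=(os.name == "nt")))
```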

Manual token retrieval


If you have the Google Cloud CLI tool installed and set up, you can view your token by running the following command:

gcloud auth print-access-token

By default, Google Cloud's OAuth 2.0 access tokens have a lifetime of 1 hour. You can create tokens that last up to 12 hours. To create longer lasting tokens, follow the instructions in the Google Cloud IAM Guide.

Since the OAuth token is only valid for a limited time, you must periodically replace the token with a new one. After you generate the new token, you have to re-instantiate your Weaviate client to use it.

You can update the OAuth token manually, but manual updates may not be appropriate for your use case.
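One way to decide when a replacement token is due is to track its age. The sketch below assumes the default 1 hour lifetime mentioned above and an arbitrary 5 minute safety margin. `RefreshingToken` and its parameters are hypothetical names, and `fetch` stands in for whatever mechanism you use to obtain a token.

```python
import time
from typing import Callable, Optional


class RefreshingToken:
    """Caches a token and fetches a new one shortly before it expires."""

    def __init__(
        self,
        fetch: Callable[[], str],
        lifetime_s: float = 3600.0,  # default access token lifetime of 1 hour
        margin_s: float = 300.0,     # assumed safety margin: refresh 5 minutes early
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch = fetch
        self._lifetime_s = lifetime_s
        self._margin_s = margin_s
        self._clock = clock
        self._token: Optional[str] = None
        self._fetched_at = 0.0

    def get(self) -> str:
        """Return the cached token, fetching a new one if it is missing or nearly expired."""
        now = self._clock()
        due = self._token is None or now - self._fetched_at >= self._lifetime_s - self._margin_s
        if due:
            self._token = self._fetch()
            self._fetched_at = now
        return self._token
```

Whenever `get()` returns a different token than before, the Weaviate client would need to be re-instantiated with the new value.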

You can also automate the OAuth token update. Weaviate does not control the OAuth token update procedure. However, here are some automation options:

With Google Cloud CLI

If you are using the Google Cloud CLI, write a script to periodically update the token and extract the results.

Python code to refresh the token and re-instantiate the client looks like this:

client = re_instantiate_weaviate()

This is the re_instantiate_weaviate function:

import subprocess

import weaviate


def refresh_token() -> str:
    # Ask the Google Cloud CLI for a fresh access token
    result = subprocess.run(["gcloud", "auth", "print-access-token"], capture_output=True, text=True)
    if result.returncode != 0:
        # Fail loudly rather than passing an empty token on to Weaviate
        raise RuntimeError(f"Error refreshing token: {result.stderr}")
    return result.stdout.strip()


def re_instantiate_weaviate() -> weaviate.Client:
    token = refresh_token()
    # weaviate.Client is the Python client v3 interface
    client = weaviate.Client(
        url="https://WEAVIATE_INSTANCE_URL",  # Replace WEAVIATE_INSTANCE_URL with the URL
        additional_headers={
            "X-Goog-Vertex-Api-Key": token,
        },
    )
    return client


# Run this every ~60 minutes
client = re_instantiate_weaviate()

With google-auth

Another way is through Google's own authentication library google-auth.

See the links to google-auth in Python and Node.js libraries.

You can then periodically call the refresh function (see Python docs) to obtain a renewed token, and re-instantiate the Weaviate client.

For example, you could periodically run:

client = re_instantiate_weaviate()

Where re_instantiate_weaviate is something like:

import os

import weaviate
from google.auth.transport.requests import Request
from google.oauth2.service_account import Credentials
from weaviate.classes.init import Auth


def get_credentials() -> Credentials:
    credentials = Credentials.from_service_account_file(
        "path/to/your/service-account.json",
        scopes=[
            "https://www.googleapis.com/auth/generative-language",
            "https://www.googleapis.com/auth/cloud-platform",
        ],
    )
    # Fetch a fresh access token for the service account
    request = Request()
    credentials.refresh(request)
    return credentials


def re_instantiate_weaviate() -> weaviate.WeaviateClient:
    weaviate_api_key = os.environ["WEAVIATE_API_KEY"]
    credentials = get_credentials()
    token = credentials.token

    client = weaviate.connect_to_weaviate_cloud(  # e.g. if you use the Weaviate Cloud Service
        cluster_url="https://WEAVIATE_INSTANCE_URL",  # Replace WEAVIATE_INSTANCE_URL with the URL
        auth_credentials=Auth.api_key(weaviate_api_key),  # Replace with your Weaviate Cloud key
        headers={
            "X-Goog-Vertex-Api-Key": token,
        },
    )
    return client


# Run this every ~60 minutes
client = re_instantiate_weaviate()

The service account key shown above can be generated by following this guide.

Provide the API key

Provide the API key to Weaviate at runtime, as shown in the examples below.

Note the separate headers that are available for AI Studio and Vertex AI users.

API key headers

From v1.27.7, v1.26.12 and v1.25.27, the X-Goog-Vertex-Api-Key and X-Goog-Studio-Api-Key headers are supported for Vertex AI and AI Studio users, respectively. We recommend these headers for the highest compatibility.

Consider X-Google-Vertex-Api-Key, X-Google-Studio-Api-Key, X-Google-Api-Key and X-PaLM-Api-Key deprecated.
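A small helper can keep the header names in one place and flag deprecated ones. This is a sketch only: `build_google_headers` and `find_deprecated` are hypothetical helpers, and the environment variable names VERTEX_APIKEY and STUDIO_APIKEY are taken from the client examples on this page.

```python
from typing import Dict, List, Mapping

VERTEX_HEADER = "X-Goog-Vertex-Api-Key"
STUDIO_HEADER = "X-Goog-Studio-Api-Key"

# Header names this page marks as deprecated, lowercased for comparison
DEPRECATED_HEADERS = {
    "x-google-vertex-api-key",
    "x-google-studio-api-key",
    "x-google-api-key",
    "x-palm-api-key",
}


def build_google_headers(env: Mapping[str, str]) -> Dict[str, str]:
    """Build the recommended headers, skipping any key that is not set."""
    headers: Dict[str, str] = {}
    if env.get("VERTEX_APIKEY"):
        headers[VERTEX_HEADER] = env["VERTEX_APIKEY"]
    if env.get("STUDIO_APIKEY"):
        headers[STUDIO_HEADER] = env["STUDIO_APIKEY"]
    return headers


def find_deprecated(headers: Mapping[str, str]) -> List[str]:
    """Return the header names in `headers` that are deprecated."""
    return sorted(name for name in headers if name.lower() in DEPRECATED_HEADERS)


print(build_google_headers({"VERTEX_APIKEY": "example-token"}))
print(find_deprecated({"X-PaLM-Api-Key": "example-token"}))
```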

- Python API v4

- JS/TS API v3

import weaviate
from weaviate.classes.init import Auth
import os

# Recommended: save sensitive data as environment variables
vertex_key = os.getenv("VERTEX_APIKEY")
studio_key = os.getenv("STUDIO_APIKEY")

headers = {
    "X-Goog-Vertex-Api-Key": vertex_key,
    "X-Goog-Studio-Api-Key": studio_key,
}

client = weaviate.connect_to_weaviate_cloud(
    cluster_url=weaviate_url,                     # `weaviate_url`: your Weaviate URL
    auth_credentials=Auth.api_key(weaviate_key),  # `weaviate_key`: your Weaviate API key
    headers=headers
)

# Work with Weaviate

client.close()

import weaviate from 'weaviate-client'

const vertexApiKey = process.env.VERTEX_APIKEY || '';  // Replace with your inference API key
const studioApiKey = process.env.STUDIO_APIKEY || '';  // Replace with your inference API key

const client = await weaviate.connectToWeaviateCloud(
  'WEAVIATE_INSTANCE_URL',  // Replace with your instance URL
  {
    authCredentials: new weaviate.ApiKey('WEAVIATE_INSTANCE_APIKEY'),
    headers: {
      // Recommended header names, as listed under "API key headers"
      'X-Goog-Vertex-Api-Key': vertexApiKey,
      'X-Goog-Studio-Api-Key': studioApiKey,
    }
  }
)

// Work with Weaviate

client.close()

Configure collection

A collection's generative model integration configuration is mutable from v1.25.23, v1.26.8 and v1.27.1. See this section for details on how to update the collection configuration.

Configure a Weaviate collection to use a Google generative AI model as follows:

Note that the required parameters differ between Vertex AI and AI Studio.

You can specify one of the available models for Weaviate to use. The default model is used if no model is specified.

Vertex AI

Vertex AI users must provide the Google Cloud project ID in the collection configuration.

- Python API v4

- JS/TS API v3

from weaviate.classes.config import Configure

client.collections.create(
    "DemoCollection",
    generative_config=Configure.Generative.google(
        project_id="<google-cloud-project-id>",  # Required for Vertex AI
        model_id="gemini-1.0-pro"
    )
    # Additional parameters not shown
)

await client.collections.create({
  name: 'DemoCollection',
  generative: weaviate.configure.generative.google({
    projectId: '<google-cloud-project-id>',  // Required for Vertex AI
    modelId: 'gemini-1.0-pro'
  }),
  // Additional parameters not shown
});

AI Studio

- Python API v4

- JS/TS API v3

from weaviate.classes.config import Configure

client.collections.create(
    "DemoCollection",
    generative_config=Configure.Generative.google(
        model_id="gemini-pro"
    )
    # Additional parameters not shown
)

await client.collections.create({
  name: 'DemoCollection',
  generative: weaviate.configure.generative.google({
    modelId: 'gemini-pro',
  }),
  // Additional parameters not shown
});

Generative parameters

Configure the following generative parameters to customize the model behavior.

- Python API v4

- JS/TS API v3

from weaviate.classes.config import Configure

client.collections.create(
    "DemoCollection",
    generative_config=Configure.Generative.google(
        # project_id="<google-cloud-project-id>",  # Required for Vertex AI
        # model_id="<google-model-id>",
        # api_endpoint="<google-api-endpoint>",
        # temperature=0.7,
        # top_k=5,
        # top_p=0.9,
        # vectorize_collection_name=False,
    )
    # Additional parameters not shown
)

await client.collections.create({
  name: 'DemoCollection',
  generative: weaviate.configure.generative.google({
    projectId: '<google-cloud-project-id>',  // Required for Vertex AI
    modelId: '<google-model-id>',
    apiEndpoint: '<google-api-endpoint>',
    temperature: 0.7,
    topK: 5,
    topP: 0.9,
  }),
  // Additional parameters not shown
});

Select a model at runtime

Aside from setting the default model provider when creating the collection, you can also override it at query time.

- Python API v4

- JS/TS Client v3

from weaviate.classes.config import Configure
from weaviate.classes.generate import GenerativeConfig

collection = client.collections.get("DemoCollection")

response = collection.generate.near_text(
    query="A holiday film",
    limit=2,
    grouped_task="Write a tweet promoting these two movies",
    generative_provider=GenerativeConfig.google(
        # # These parameters are optional
        # project_id="<google-cloud-project-id>",  # Required for Vertex AI
        # model_id="<google-model-id>",
        # api_endpoint="<google-api-endpoint>",
        # temperature=0.7,
        # top_k=5,
        # top_p=0.9,
        # vectorize_collection_name=False,
    ),
    # Additional parameters not shown
)

import { generativeParameters } from 'weaviate-client';

let response;
const myCollection = client.collections.use("DemoCollection");

response = await myCollection.generate.nearText("A holiday film", {
    groupedTask: "Write a tweet promoting these two movies",
    config: generativeParameters.google({
      // These parameters are optional
      // projectId: '<google-cloud-project-id>',  // Required for Vertex AI
      // modelId: '<google-model-id>',
      // apiEndpoint: '<google-api-endpoint>',
      // temperature: 0.7,
      // topK: 5,
      // topP: 0.9,
    }),
  }, {
    limit: 2,
  }
  // Additional parameters not shown
);

Retrieval augmented generation

After configuring the generative AI integration, perform RAG operations, either with the single prompt or grouped task method.

Single prompt

To generate text for each object in the search results, use the single prompt method.

The example below generates outputs for each of the n search results, where n is specified by the limit parameter.

When creating a single prompt query, use braces {} to interpolate the object properties you want Weaviate to pass on to the language model. For example, to pass on the object's title property, include {title} in the query.

- Python API v4

- JS/TS API v3

collection = client.collections.get("DemoCollection")

response = collection.generate.near_text(
    query="A holiday film",  # The model provider integration will automatically vectorize the query
    single_prompt="Translate this into French: {title}",
    limit=2
)

for obj in response.objects:
    print(obj.properties["title"])
    print(f"Generated output: {obj.generated}")  # Note that the generated output is per object

const myCollection = client.collections.use("DemoCollection");

const singlePromptResults = await myCollection.generate.nearText('A holiday film', {
  singlePrompt: `Translate this into French: {title}`,
}, {
  limit: 2,
});

for (const obj of singlePromptResults.objects) {
  console.log(obj.properties['title']);
  console.log(`Generated output: ${obj.generative?.text}`);  // Note that the generated output is per object
}
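To make the brace interpolation concrete, the sketch below fills a single prompt template once per object, which is why a single prompt query yields one output per search result. It only illustrates the idea and is not Weaviate's implementation. `fill_prompt` is a hypothetical helper and the object titles are made-up sample data.

```python
import re
from typing import Any, Dict


def fill_prompt(template: str, properties: Dict[str, Any]) -> str:
    """Replace each {name} placeholder with the matching property value."""

    def substitute(match: "re.Match[str]") -> str:
        name = match.group(1)
        if name not in properties:
            raise KeyError(f"Object has no property named {name!r}")
        return str(properties[name])

    return re.sub(r"\{(\w+)\}", substitute, template)


# Made-up search results for illustration
objects = [{"title": "Sample Film A"}, {"title": "Sample Film B"}]

# One filled-in prompt per object, so one generated output per object
prompts = [fill_prompt("Translate this into French: {title}", obj) for obj in objects]
for prompt in prompts:
    print(prompt)
```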

Grouped task

To generate one text for the entire set of search results, use the grouped task method.

In other words, when you have n search results, the generative model generates one output for the entire group.

- Python API v4

- JS/TS API v3

collection = client.collections.get("DemoCollection")

response = collection.generate.near_text(
    query="A holiday film",  # The model provider integration will automatically vectorize the query
    grouped_task="Write a fun tweet to promote readers to check out these films.",
    limit=2
)

print(f"Generated output: {response.generated}")  # Note that the generated output is per query

for obj in response.objects:
    print(obj.properties["title"])

const myCollection = client.collections.use("DemoCollection");

const groupedTaskResults = await myCollection.generate.nearText('A holiday film', {
  groupedTask: `Write a fun tweet to promote readers to check out these films.`,
}, {
  limit: 2,
});

console.log(`Generated output: ${groupedTaskResults.generative?.text}`);  // Note that the generated output is per query

for (const obj of groupedTaskResults.objects) {
  console.log(obj.properties['title']);
}
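By contrast with a single prompt, a grouped task combines all n results into one request. The sketch below shows that shape under an assumed prompt layout: the task followed by each object serialized as JSON. The exact format Weaviate sends to the model is not described here, so treat `build_grouped_prompt` and the sample objects as hypothetical.

```python
import json
from typing import Any, Dict, List


def build_grouped_prompt(task: str, objects: List[Dict[str, Any]]) -> str:
    """Combine the task and every retrieved object into one prompt (assumed layout)."""
    context = "\n".join(json.dumps(obj, sort_keys=True) for obj in objects)
    return f"{task}\n\n{context}"


# Made-up search results for illustration
objects = [{"title": "Sample Film A"}, {"title": "Sample Film B"}]

# A single prompt for the whole group, so a single generated output per query
grouped_prompt = build_grouped_prompt("Write a tweet promoting these two movies", objects)
print(grouped_prompt)
```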

RAG with images

You can also supply images as a part of the input when performing retrieval augmented generation in both single prompts and grouped tasks.

- Python API v4

- JS/TS API v3

import base64
import requests
from weaviate.classes.generate import GenerativeConfig, GenerativeParameters

src_img_path = "https://upload.wikimedia.org/wikipedia/commons/thumb/b/b0/Winter_forest_silver.jpg/960px-Winter_forest_silver.jpg"
base64_image = base64.b64encode(requests.get(src_img_path).content).decode('utf-8')

prompt = GenerativeParameters.grouped_task(
    prompt="Which movie is closest to the image in terms of atmosphere",
    images=[base64_image],  # A list of base64 encoded strings of the image bytes
    # image_properties=["img"],  # Properties containing images in Weaviate
)

collection = client.collections.get("DemoCollection")

response = collection.generate.near_text(
    query="Movies",
    limit=5,
    grouped_task=prompt,
    generative_provider=GenerativeConfig.google(
        max_tokens=1000
    ),
)

# Print the source property and the generated response
for o in response.objects:
    print(f"Title property: {o.properties['title']}")

print(f"Grouped task result: {response.generative.text}")

import { generativeParameters } from 'weaviate-client';

function arrayBufferToBase64(buffer: ArrayBuffer): string {
  const bytes = new Uint8Array(buffer);
  let binary = '';
  const chunkSize = 1024;  // Process in chunks to avoid call stack issues

  for (let i = 0; i < bytes.length; i += chunkSize) {
    const chunk = bytes.slice(i, Math.min(i + chunkSize, bytes.length));
    binary += String.fromCharCode.apply(null, Array.from(chunk));
  }

  return btoa(binary);
}

let response;
const myCollection = client.collections.use("DemoCollection");

const srcImgPath = "https://upload.wikimedia.org/wikipedia/commons/thumb/b/b0/Winter_forest_silver.jpg/960px-Winter_forest_silver.jpg"
const responseImg = await fetch(srcImgPath);
const image = await responseImg.arrayBuffer();
const base64String = arrayBufferToBase64(image);

const prompt = {
  prompt: "Which movie is closest to the image in terms of atmosphere",
  images: [base64String],  // A list of base64 encoded strings of the image bytes
  // imageProperties: ["img"],  // Properties containing images in Weaviate
}

response = await myCollection.generate.nearText("Movies", {
  groupedTask: prompt,
  config: generativeParameters.google({
    maxTokens: 1000,
  }),
}, {
  limit: 5,
})

// Print the source property and the generated response
for (const item of response.objects) {
  console.log("Title property:", item.properties['title'])
}

console.log("Grouped task result:", response.generative?.text)
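The examples above fetch the image over HTTP. If the image is a local file instead, the same base64 string can be produced as sketched below. `encode_image_file` is a hypothetical helper, and the demonstration writes placeholder bytes to a throwaway file rather than using a real image.

```python
import base64
import tempfile
from pathlib import Path


def encode_image_file(path: str) -> str:
    """Read a file and return its bytes as a base64-encoded UTF-8 string."""
    return base64.b64encode(Path(path).read_bytes()).decode("utf-8")


# Demonstration with a throwaway file; the bytes are placeholders, not a real image
with tempfile.TemporaryDirectory() as tmp_dir:
    sample_path = Path(tmp_dir) / "sample.bin"
    sample_path.write_bytes(b"\x89PNG placeholder")
    base64_image = encode_image_file(str(sample_path))

print(base64_image)
```

The resulting string could then be passed in the `images` list in the same way as the downloaded image.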

References

Available models

Vertex AI:

- chat-bison (default)

- chat-bison-32k (from Weaviate v1.24.9)

- chat-bison@002 (from Weaviate v1.24.9)

- chat-bison-32k@002 (from Weaviate v1.24.9)

- chat-bison@001 (from Weaviate v1.24.9)

- gemini-1.5-pro-preview-0514 (from Weaviate v1.25.1)

- gemini-1.5-pro-preview-0409 (from Weaviate v1.25.1)

- gemini-1.5-flash-preview-0514 (from Weaviate v1.25.1)

- gemini-1.0-pro-002 (from Weaviate v1.25.1)

- gemini-1.0-pro-001 (from Weaviate v1.25.1)

- gemini-1.0-pro (from Weaviate v1.25.1)

AI Studio:

- chat-bison-001 (default)

- gemini-pro

Further resources

Other integrations

Code examples

Once the integrations are configured at the collection, the data management and search operations in Weaviate work identically to any other collection. See the following model-agnostic examples:

- The how-to: manage data guides show how to perform data operations (i.e. create, update, delete).

- The how-to: search guides show how to perform search operations (i.e. vector, keyword, hybrid) as well as retrieval augmented generation.

References

Questions and feedback

If you have any questions or feedback, let us know in the user forum.