Locally Hosted CLIP Embeddings + Weaviate

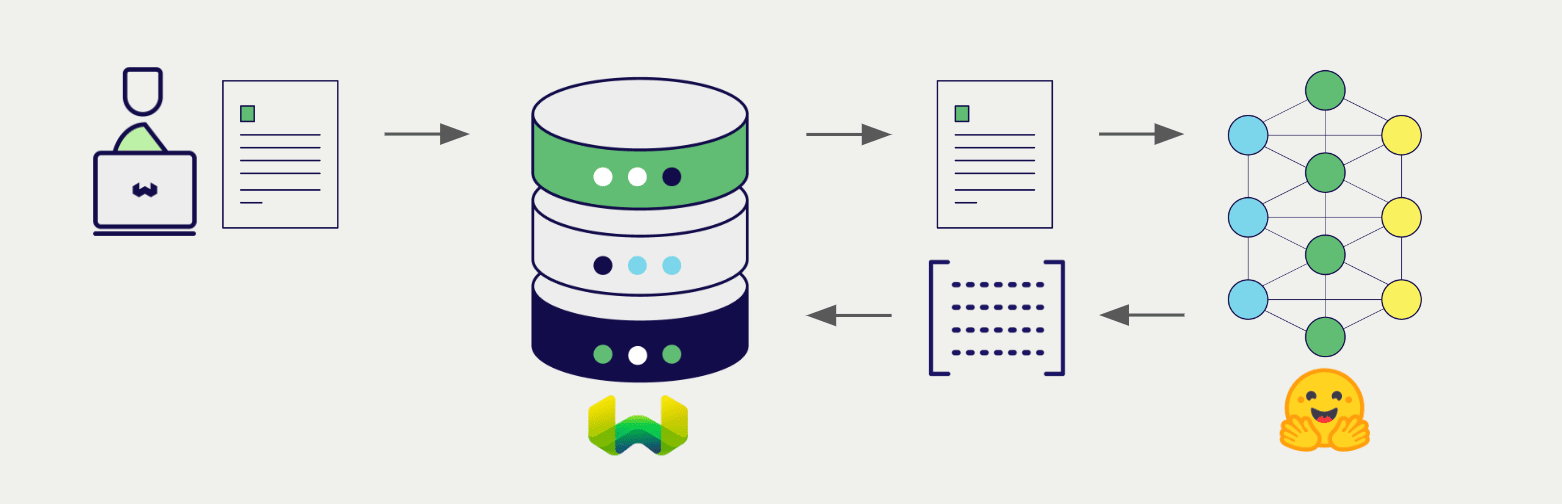

Weaviate's integration with the Hugging Face Transformers library allows you to access their CLIP models' capabilities directly from Weaviate.

Configure a Weaviate vector index to use the CLIP integration, and configure the Weaviate instance with a model image. Weaviate then generates embeddings for various operations using the specified model in the CLIP inference container. This feature is called the vectorizer.

At import time, Weaviate generates multimodal object embeddings and saves them into the index. For vector and hybrid search operations, Weaviate converts queries of one or more modalities into embeddings. Multimodal search operations are also supported.

Requirements

Weaviate configuration

Your Weaviate instance must be configured with the CLIP multimodal vectorizer integration (multi2vec-clip) module.

For Weaviate Cloud (WCD) users

This integration is not available for Weaviate Cloud (WCD) serverless instances, as it requires spinning up a container with the Hugging Face model.

Enable the integration module

- Check the cluster metadata to verify if the module is enabled.

- Follow the how-to configure modules guide to enable the module in Weaviate.
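As a rough illustration of the first check, the sketch below inspects a cluster metadata payload for the module name. It assumes the metadata is the JSON object served by Weaviate's `/v1/meta` REST endpoint and that enabled modules appear as keys under `modules`. The helper names `is_module_enabled` and `fetch_meta`, the local URL, and the sample payload are hypothetical.

```python
import json
from urllib.request import urlopen


def is_module_enabled(meta: dict, module_name: str = "multi2vec-clip") -> bool:
    """Return True if `module_name` is listed in the metadata's `modules` mapping."""
    return module_name in (meta.get("modules") or {})


def fetch_meta(base_url: str = "http://localhost:8080") -> dict:
    """Fetch cluster metadata; assumes an unauthenticated, locally reachable instance."""
    with urlopen(f"{base_url}/v1/meta") as resp:
        return json.load(resp)


# Hypothetical payload, trimmed to the fields used here
sample_meta = {"version": "x.y.z", "modules": {"multi2vec-clip": {}}}
print(is_module_enabled(sample_meta))
```

Against a running instance, `is_module_enabled(fetch_meta())` would perform the same check on live metadata.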

Configure the integration

To use this integration, configure the container image of the CLIP model and the inference endpoint of the containerized model.

The following example shows how to configure the CLIP integration in Weaviate:

- Docker

- Kubernetes

Docker Option 1: Use a pre-configured docker-compose.yml file

Follow the instructions on the Weaviate Docker installation configurator to download a pre-configured docker-compose.yml file with a selected model.

Docker Option 2: Add the configuration manually

Alternatively, add the configuration to the docker-compose.yml file manually as in the example below.

services:
  weaviate:
    # Other Weaviate configuration
    environment:
      CLIP_INFERENCE_API: http://multi2vec-clip:8080  # Set the inference API endpoint
  multi2vec-clip:  # Set the name of the inference container
    image: cr.weaviate.io/semitechnologies/multi2vec-clip:sentence-transformers-clip-ViT-B-32-multilingual-v1
    environment:
      ENABLE_CUDA: 0  # Set to 1 to enable

- CLIP_INFERENCE_API environment variable sets the inference API endpoint

- multi2vec-clip is the name of the inference container

- image is the container image

- ENABLE_CUDA environment variable enables GPU usage

Set image from a list of available models to specify a particular model to be used.

For Kubernetes, configure the CLIP integration in Weaviate by adding or updating the multi2vec-clip module in the modules section of the Weaviate Helm chart values file. For example, modify the values.yaml file as follows:

modules:
  multi2vec-clip:
    enabled: true
    tag: sentence-transformers-clip-ViT-B-32-multilingual-v1
    repo: semitechnologies/multi2vec-clip
    registry: cr.weaviate.io
    envconfig:
      enable_cuda: true

See the Weaviate Helm chart for an example of the values.yaml file including more configuration options.

Set tag from a list of available models to specify a particular model to be used.

Credentials

As this integration runs a local container with the CLIP model, no additional credentials (e.g. API key) are required. Connect to Weaviate as usual.

Configure the vectorizer

Configure a Weaviate index as follows to use a CLIP embedding model:

- Python API v4

- JS/TS API v3

from weaviate.classes.config import Configure, DataType, Multi2VecField, Property

client.collections.create(
    "DemoCollection",
    properties=[
        Property(name="title", data_type=DataType.TEXT),
        Property(name="poster", data_type=DataType.BLOB),
    ],
    vectorizer_config=[
        Configure.NamedVectors.multi2vec_clip(
            name="title_vector",
            # Define the fields to be used for the vectorization - using image_fields, text_fields
            image_fields=[
                Multi2VecField(name="poster", weight=0.9)
            ],
            text_fields=[
                Multi2VecField(name="title", weight=0.1)
            ]
        )
    ],
    # Additional parameters not shown
)

await client.collections.create({
  name: 'DemoCollection',
  properties: [
    {
      name: 'title',
      dataType: 'text' as const,
    },
    {
      name: 'poster',
      dataType: 'blob' as const,
    },
  ],
  vectorizers: [
    weaviate.configure.vectorizer.multi2VecClip({
      name: 'title_vector',
      imageFields: [
        {
          name: 'poster',
          weight: 0.9,
        },
      ],
      textFields: [
        {
          name: 'title',
          weight: 0.1,
        },
      ],
    }),
  ],
  // Additional parameters not shown
});

To choose a model, select the container image that hosts it.
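The `weight` values in the collection definitions above control how much each field contributes to the object's vector. How the module combines the per-field embeddings is not described here. Purely as an assumption for illustration, the sketch below treats it as a weighted average with weights normalized to sum to 1. The function name `combine_weighted` and the toy vectors are hypothetical.

```python
from typing import List, Sequence


def combine_weighted(vectors: Sequence[Sequence[float]], weights: Sequence[float]) -> List[float]:
    """Weighted average of equal-length vectors; weights are normalized to sum to 1."""
    if not vectors or len(vectors) != len(weights):
        raise ValueError("need one weight per vector")
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    dim = len(vectors[0])
    if any(len(v) != dim for v in vectors):
        raise ValueError("all vectors must have the same dimension")
    return [
        sum(w / total * v[i] for v, w in zip(vectors, weights))
        for i in range(dim)
    ]


# Toy 2-dimensional "embeddings" for the poster (image) and title (text) fields
poster_vec = [1.0, 0.0]
title_vec = [0.0, 1.0]
combined = combine_weighted([poster_vec, title_vec], [0.9, 0.1])
```

Under this assumption, a 0.9 / 0.1 split means the poster image dominates the resulting vector, while the title only nudges it.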

Vectorization behavior

Weaviate follows the collection configuration and a set of predetermined rules to vectorize objects.

Unless specified otherwise in the collection definition, the default behavior is to:

- Only vectorize properties that use the text or text[] data type (unless skipped)

- Sort properties in alphabetical (a-z) order before concatenating values

- If vectorizePropertyName is true (false by default) prepend the property name to each property value

- Join the (prepended) property values with spaces

- Prepend the class name (unless vectorizeClassName is false)

- Convert the produced string to lowercase
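The default rules listed above can be sketched as a small function. This is an illustration of the listed steps only, not Weaviate's implementation; the function name `build_vectorization_text`, its parameters, and the sample object are hypothetical.

```python
from typing import Dict, List


def build_vectorization_text(
    class_name: str,
    properties: Dict[str, object],
    vectorize_property_name: bool = False,
    vectorize_class_name: bool = True,
) -> str:
    """Assemble the text for an object by applying the default rules in order."""
    parts: List[str] = []
    for name in sorted(properties):  # alphabetical order of property names
        value = properties[name]
        # Only text (str) and text[] (list of str) values are considered
        if isinstance(value, str):
            values = [value]
        elif isinstance(value, list) and all(isinstance(v, str) for v in value):
            values = value
        else:
            continue
        for v in values:
            # Optionally prepend the property name to each value
            parts.append(f"{name} {v}" if vectorize_property_name else v)
    if vectorize_class_name:
        parts.insert(0, class_name)
    # Join with spaces, then lowercase the whole string
    return " ".join(parts).lower()


text = build_vectorization_text(
    "DemoCollection",
    {"title": "A Holiday Film", "genres": ["Comedy", "Family"], "year": 2003},
)
print(text)
```

In this sample, `year` is skipped because it is not a text value, and `genres` comes before `title` because of the alphabetical sort.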

Vectorizer parameters

Inference URL parameters

Optionally, if your stack includes multiple inference containers, specify the inference container(s) to use with a collection.

If no parameters are specified, the default inference URL from the Weaviate configuration is used.

Specify inferenceUrl for a single inference container.

- Python API v4

- JS/TS API v3

from weaviate.classes.config import Configure, DataType, Multi2VecField, Property

client.collections.create(
    "DemoCollection",
    properties=[
        Property(name="title", data_type=DataType.TEXT),
        Property(name="poster", data_type=DataType.BLOB),
    ],
    vectorizer_config=[
        Configure.NamedVectors.multi2vec_clip(
            name="title_vector",
            # Define the fields to be used for the vectorization - using image_fields, text_fields
            image_fields=[
                Multi2VecField(name="poster", weight=0.9)
            ],
            text_fields=[
                Multi2VecField(name="title", weight=0.1)
            ],
            # inference_url="<custom_clip_url>"
        )
    ],
    # Additional parameters not shown
)

await client.collections.create({
  name: 'DemoCollection',
  properties: [
    {
      name: 'title',
      dataType: 'text' as const,
    },
    {
      name: 'poster',
      dataType: 'blob' as const,
    },
  ],
  vectorizers: [
    weaviate.configure.vectorizer.multi2VecClip({
      name: 'title_vector',
      imageFields: [
        {
          name: 'poster',
          weight: 0.9,
        },
      ],
      textFields: [
        {
          name: 'title',
          weight: 0.1,
        },
      ],
      // inferenceUrl: '<custom_clip_url>'
    }),
  ],
});
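The fallback described in this section (a collection-level inference URL if one is set, otherwise the default from the Weaviate configuration) can be pictured with the sketch below. It only illustrates the documented precedence and is not Weaviate's code; `resolve_inference_url` and the `http://clip-b:8080` address are hypothetical.

```python
import os
from typing import Mapping, Optional


def resolve_inference_url(
    collection_inference_url: Optional[str],
    env: Mapping[str, str] = os.environ,
) -> str:
    """Prefer the collection-level URL; otherwise fall back to CLIP_INFERENCE_API."""
    if collection_inference_url:
        return collection_inference_url
    default_url = env.get("CLIP_INFERENCE_API")
    if not default_url:
        raise ValueError("no inference URL configured")
    return default_url


# Stand-in for the Weaviate container's environment
env = {"CLIP_INFERENCE_API": "http://multi2vec-clip:8080"}
default_choice = resolve_inference_url(None, env)
override_choice = resolve_inference_url("http://clip-b:8080", env)
```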

Data import

After configuring the vectorizer, import data into Weaviate. Weaviate generates embeddings for the objects using the specified model.

- Python API v4

- JS/TS API v3

collection = client.collections.get("DemoCollection")

with collection.batch.fixed_size(batch_size=200) as batch:
    for src_obj in source_objects:
        poster_b64 = url_to_base64(src_obj["poster_path"])
        weaviate_obj = {
            "title": src_obj["title"],
            "poster": poster_b64  # Add the image in base64 encoding
        }

        # The model provider integration will automatically vectorize the object
        batch.add_object(
            properties=weaviate_obj,
            # vector=vector  # Optionally provide a pre-obtained vector
        )

const collectionName = 'DemoCollection'
const myCollection = client.collections.use(collectionName)

let multiModalObjects = []

for (let mmSrcObject of mmSrcObjects) {
  multiModalObjects.push({
    title: mmSrcObject.title,
    poster: mmSrcObject.poster,  // Add the image in base64 encoding
  });
}

// The model provider integration will automatically vectorize the object
const mmInsertResponse = await myCollection.data.insertMany(multiModalObjects);

console.log(mmInsertResponse);

If you already have a compatible model vector available, you can provide it directly to Weaviate. This can be useful if you have already generated embeddings using the same model and want to use them in Weaviate, such as when migrating data from another system.
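The import examples above pass the poster image to Weaviate as a base64 string. For images that are already on disk, a minimal sketch of the encoding step could look like the following. `file_to_base64` is a hypothetical helper name, not part of the Weaviate client, and the temporary file only stands in for a real image.

```python
import base64
import tempfile
from pathlib import Path


def file_to_base64(path: str) -> str:
    """Read a local file and return its contents as a base64-encoded string."""
    return base64.b64encode(Path(path).read_bytes()).decode("utf-8")


# Stand-in for a real image file on disk
with tempfile.NamedTemporaryFile(suffix=".bin", delete=False) as tmp:
    tmp.write(b"\x89PNG placeholder bytes")

demo_b64 = file_to_base64(tmp.name)
```

The resulting string can be used as the value of a blob property such as `poster`.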

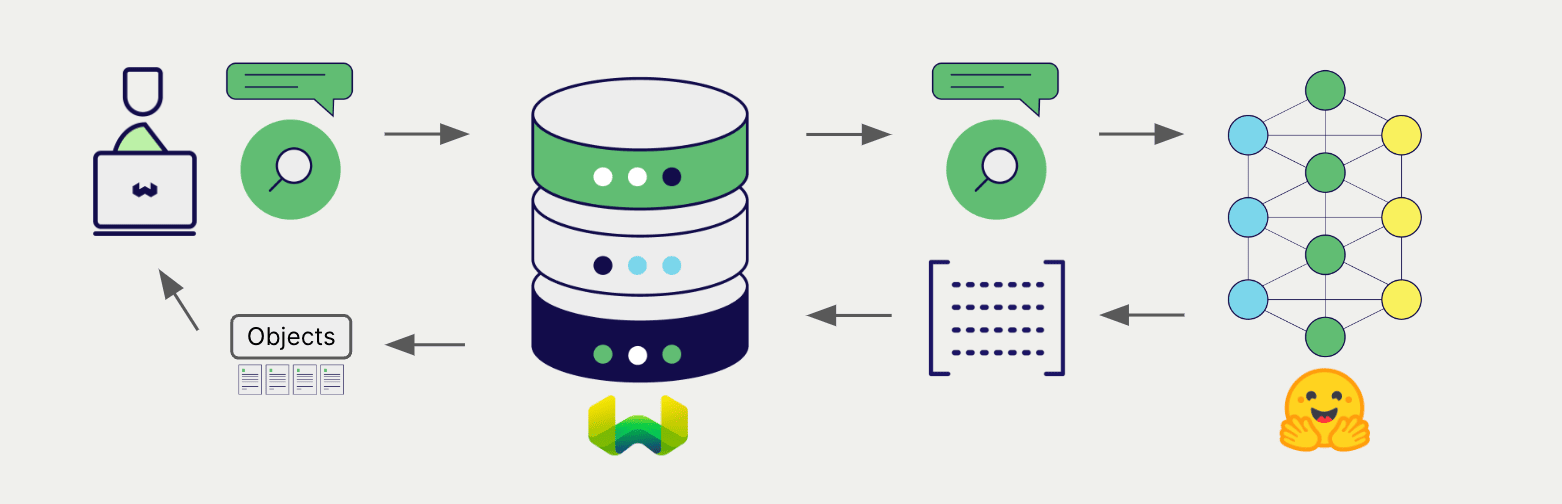

Searches

Once the vectorizer is configured, Weaviate will perform vector and hybrid search operations using the specified CLIP model.

Vector (near text) search

When you perform a vector search, Weaviate converts the text query into an embedding using the specified model and returns the most similar objects from the database.

The query below returns the n most similar objects from the database, where n is set by limit.

- Python API v4

- JS/TS API v3

collection = client.collections.get("DemoCollection")

response = collection.query.near_text(
    query="A holiday film",  # The model provider integration will automatically vectorize the query
    limit=2
)

for obj in response.objects:
    print(obj.properties["title"])

const collectionName = 'DemoCollection'
const myCollection = client.collections.use(collectionName)

let result;

result = await myCollection.query.nearText(
  'A holiday film',  // The model provider integration will automatically vectorize the query
  {
    limit: 2,
  }
)

console.log(JSON.stringify(result.objects, null, 2));
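Conceptually, a near text search ranks stored object vectors by their similarity to the query vector and keeps the top `limit` results. The sketch below illustrates that idea with a brute-force ranking over toy vectors. It assumes cosine similarity as the metric and is not a description of how Weaviate's vector index is implemented; all names and values are hypothetical.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_n(
    query: Sequence[float],
    stored: Dict[str, Sequence[float]],
    limit: int,
) -> List[Tuple[str, float]]:
    """Score every stored vector against the query and keep the best `limit`."""
    scored = [(title, cosine_similarity(query, vec)) for title, vec in stored.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:limit]


# Toy 2-dimensional vectors standing in for stored object embeddings
stored_vectors = {
    "Film A": [0.9, 0.1],
    "Film B": [0.1, 0.9],
    "Film C": [0.7, 0.3],
}
results = top_n([1.0, 0.0], stored_vectors, limit=2)
```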

Hybrid search

A hybrid search performs a vector search and a keyword (BM25) search, before combining the results to return the best matching objects from the database.

When you perform a hybrid search, Weaviate converts the text query into an embedding using the specified model and returns the best scoring objects from the database.

The query below returns the n best scoring objects from the database, where n is set by limit.

- Python API v4

- JS/TS API v3

collection = client.collections.get("DemoCollection")

response = collection.query.hybrid(
    query="A holiday film",  # The model provider integration will automatically vectorize the query
    limit=2
)

for obj in response.objects:
    print(obj.properties["title"])

const collectionName = 'DemoCollection'
const myCollection = client.collections.use(collectionName)

const result = await myCollection.query.hybrid(
  'A holiday film',  // The model provider integration will automatically vectorize the query
  {
    limit: 2,
  }
)

console.log(JSON.stringify(result.objects, null, 2));
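To make the combining step of a hybrid search more concrete, the sketch below fuses a vector result list and a keyword result list by min-max normalizing each score set and taking a weighted sum. This is one illustrative fusion scheme under assumed inputs. The function names, the `alpha` weighting as used here, and the sample scores are hypothetical, and Weaviate's actual fusion algorithm may differ.

```python
from typing import Dict, List, Tuple


def normalize(scores: Dict[str, float]) -> Dict[str, float]:
    """Min-max normalize scores to [0, 1]; a constant score set maps to 1.0."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {key: 1.0 for key in scores}
    return {key: (value - lo) / (hi - lo) for key, value in scores.items()}


def fuse(
    vector_scores: Dict[str, float],
    keyword_scores: Dict[str, float],
    alpha: float = 0.5,
    limit: int = 2,
) -> List[Tuple[str, float]]:
    """Blend normalized scores: alpha weights the vector side, 1 - alpha the keyword side."""
    v, k = normalize(vector_scores), normalize(keyword_scores)
    combined = {
        obj_id: alpha * v.get(obj_id, 0.0) + (1 - alpha) * k.get(obj_id, 0.0)
        for obj_id in set(v) | set(k)
    }
    ranked = sorted(combined.items(), key=lambda item: item[1], reverse=True)
    return ranked[:limit]


# Hypothetical per-object scores from the two searches
vector_scores = {"a": 0.92, "b": 0.85, "c": 0.40}
keyword_scores = {"b": 3.1, "c": 2.0, "d": 1.2}
best = fuse(vector_scores, keyword_scores, alpha=0.5, limit=2)
```

In this toy data, object `b` ranks first because it scores well in both searches, while `a` appears only in the vector results.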

Vector (near media) search

When you perform a media search such as a near image search, Weaviate converts the query into an embedding using the specified model and returns the most similar objects from the database.

To perform a near media search such as near image search, convert the media query into a base64 string and pass it to the search query.

The query below returns the n objects from the database most similar to the input image, where n is set by limit.

- Python API v4

- JS/TS API v3

import base64

import requests


def url_to_base64(url):
    # Download the image and return its contents as a base64 string
    image_response = requests.get(url)
    content = image_response.content
    return base64.b64encode(content).decode("utf-8")


collection = client.collections.get("DemoCollection")

query_b64 = url_to_base64(src_img_path)

response = collection.query.near_image(
    near_image=query_b64,
    limit=2,
    return_properties=["title", "release_date", "tmdb_id", "poster"]  # To include the poster property in the response (`blob` properties are not returned by default)
)

for obj in response.objects:
    print(obj.properties["title"])

const base64String = 'SOME_BASE_64_REPRESENTATION';

const result = await myCollection.query.nearImage(
  base64String,  // The model provider integration will automatically vectorize the query
  {
    limit: 2,
  }
)

console.log(JSON.stringify(result.objects, null, 2));

References

Available models

Pre-built Docker images for this integration are listed below.

| Model Name | Image Name | Notes |
|---|---|---|
| sentence-transformers-clip-ViT-B-32 | cr.weaviate.io/semitechnologies/multi2vec-clip:sentence-transformers-clip-ViT-B-32 | Texts must be in English. (English, 768d) |
| sentence-transformers-clip-ViT-B-32-multilingual-v1 | cr.weaviate.io/semitechnologies/multi2vec-clip:sentence-transformers-clip-ViT-B-32-multilingual-v1 | Supports a wide variety of languages for text. See sbert.net for details. (Multilingual, 768d) |
| openai-clip-vit-base-patch16 | cr.weaviate.io/semitechnologies/multi2vec-clip:openai-clip-vit-base-patch16 | The base model uses a ViT-B/16 Transformer architecture as an image encoder and uses a masked self-attention Transformer as a text encoder. |
| ViT-B-16-laion2b_s34b_b88k | cr.weaviate.io/semitechnologies/multi2vec-clip:ViT-B-16-laion2b_s34b_b88k | The base model uses a ViT-B/16 Transformer architecture as an image encoder trained with LAION-2B dataset using OpenCLIP. |
| ViT-B-32-quickgelu-laion400m_e32 | cr.weaviate.io/semitechnologies/multi2vec-clip:ViT-B-32-quickgelu-laion400m_e32 | The base model uses a ViT-B/32 Transformer architecture as an image encoder trained with LAION-400M dataset using OpenCLIP. |
| xlm-roberta-base-ViT-B-32-laion5b_s13b_b90k | cr.weaviate.io/semitechnologies/multi2vec-clip:xlm-roberta-base-ViT-B-32-laion5b_s13b_b90k | Uses ViT-B/32 xlm roberta base model trained with the LAION-5B dataset using OpenCLIP. |

We add new model support over time. For a complete list of available models, see the Docker Hub tags for the multi2vec-clip container.

Advanced configuration

Run a separate inference container

As an alternative, you can run the inference container independently from Weaviate. To do so, follow these steps:

- Enable multi2vec-clip and omit multi2vec-clip container parameters in your Weaviate configuration

- Run the inference container separately, e.g. using Docker, and

- Use CLIP_INFERENCE_API or inferenceUrl to set the URL of the inference container.

For example, run the container with Docker:

docker run -itp "8000:8080" semitechnologies/multi2vec-clip:sentence-transformers-clip-ViT-B-32-multilingual-v1

Then, set CLIP_INFERENCE_API="http://localhost:8000". If Weaviate is on the same Docker network, for example because both services are defined in the same docker-compose.yml file, you can use Docker networking/DNS instead, such as CLIP_INFERENCE_API=http://multi2vec-clip:8080.

Further resources

Code examples

Once the integration is configured for the collection, data management and search operations in Weaviate work identically to any other collection. See the following model-agnostic examples:

- The how-to: manage data guides show how to perform data operations (i.e. create, update, delete).

- The how-to: search guides show how to perform search operations (i.e. vector, keyword, hybrid) as well as retrieval augmented generation.

Model licenses

Each of the compatible models has its own license. For detailed information, review the license for the model you are using in the Hugging Face Model Hub.

It is your responsibility to evaluate whether the terms of its license(s), if any, are appropriate for your intended use.

External resources

- Hugging Face Model Hub

Questions and feedback

If you have any questions or feedback, let us know in the user forum.